On Thursday, June 22, I was at the Milan Convention Center at the annual event organized by Amazon Web Services to promote its evolving Cloud services: AWS SUMMIT.

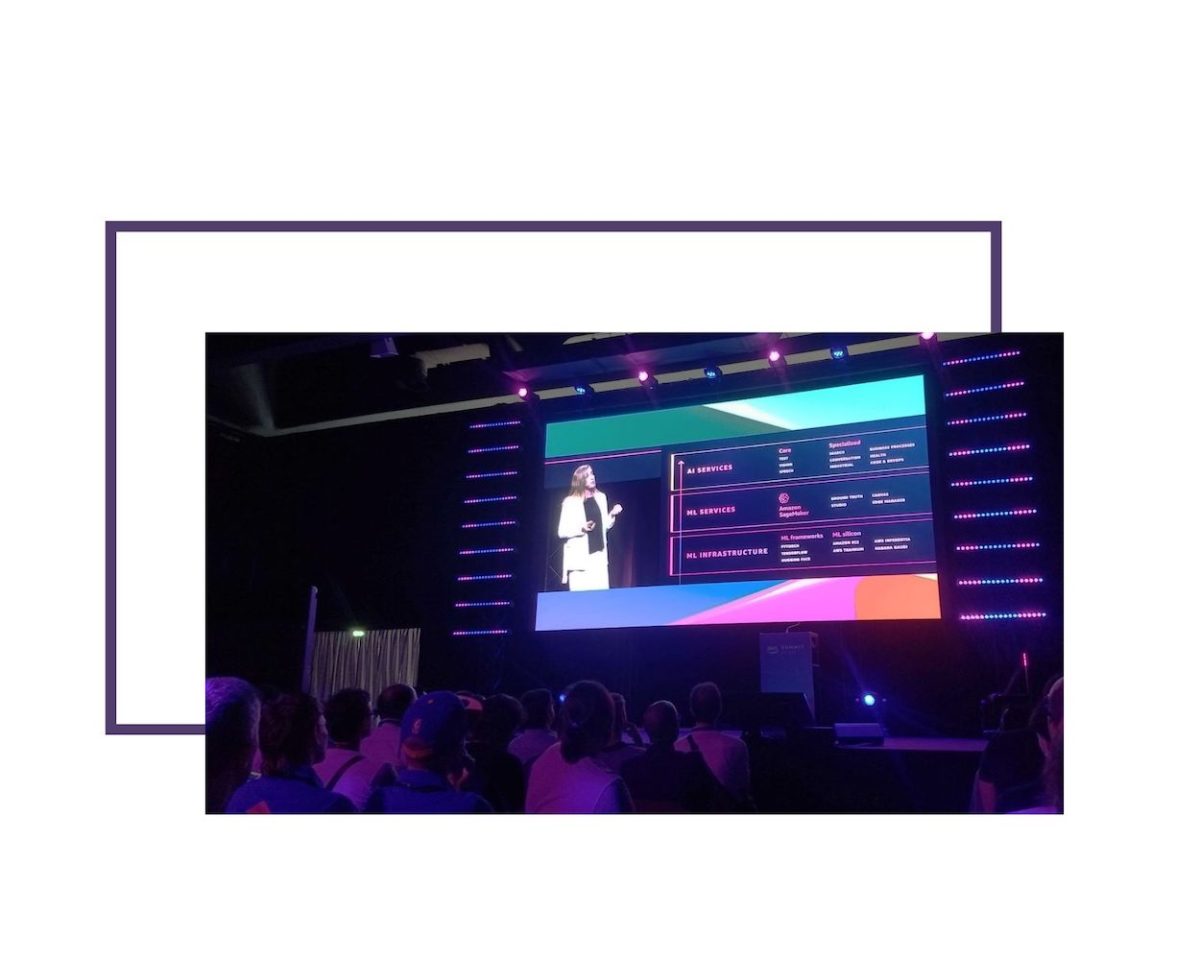

Amid all the IoT, Servitization, Cloud Computing above the clouds (in space!), and Supercomputing, one service clearly dominated the scene: SageMaker, entirely dedicated to applying Machine Learning across the most diverse domains.

We are no longer talking about experiments but about success stories, although Gartner still estimates that 47 percent of ML projects fail to make it from the Proof of Concept (POC) stage to production.

However, a managed Cloud environment like AWS blurs the line between POC and product: once the prototype demonstrates feasibility, scaling it to handle production workloads is almost immediate.

The Opening Keynote was presented by Holly Mesrobian, VP AWS Serverless Compute, and Julien Groues, Managing Director AWS France & Italy.

Besides a roundup of the latest offerings, they presented achievements of AWS customers such as Infocert, which, thanks to the opening of the Italian AWS Region in Milan, was able to bring 2 Petabytes of LegalDoc documents back from abroad for regulatory compliance reasons.

Fincantieri is thoroughly digitizing its shipyards and the ships it builds, with the goal of achieving Net Zero emissions, and is also optimizing its processes through quantum computing.

D-Orbit’s space logistics are also interesting: its “space vans”, connected to AWS Ground Station, may in the future serve as “orbital routers” forming a mesh network in space, with Edge Computing functions that reduce the bandwidth needed to transmit data from satellites to the ground.

Of the many parallel talks on the agenda, I chose those most relevant to the projects I work on at Interlogica, which span two rapidly converging worlds: IoT and Machine Learning.

Between sessions, I was also able to visit the exhibition spaces, where ample room was given to DeepRacer, autonomous model race cars whose driving models are trained in a simulator (training) and then race on a physical circuit on their own (inference).

Another interesting demo was the connected foosball table: video from an ordinary smartphone is processed locally (on the Edge, without sending the video to the Cloud), where Machine Learning tracks the movements of the players and the ball and keeps the score in real time.

BREAKOUT SESSIONS

INDUSTRIAL IOT AND SERVITIZATION ON AWS: THE DANIELI EXPERIENCE BETWEEN PRESENT AND FUTURE

A rising topic in the Manufacturing Industry is so-called Servitization: rather than just selling a product, a manufacturer uses IoT to offer ongoing services related to the product itself, shifting from CapEx (Capital Expenditure, the cost of developing or acquiring durable assets for a product or system) to OpEx (Operating Expense, the ongoing cost of operating a product).

Among the most desirable services made possible by new technologies, on both the Cloud and the Edge side, is Predictive Maintenance.

Data collected by IoT devices is analyzed with Machine Learning models to identify conditions that may lead to a malfunction before it occurs.
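As a rough illustration of the idea (not the model Danieli or Amazon Monitron actually uses), here is a minimal sketch that flags readings deviating too far from the recent past. It assumes readings arrive as a plain list of numeric vibration values; the function name `flag_anomalies`, the window size, and the threshold are hypothetical choices.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=20, threshold=3.0):
    """Return the indices of readings that deviate from the trailing window
    by more than `threshold` standard deviations (a rolling z-score).

    Hypothetical helper for illustration; `window` must be at least 2."""
    anomalies = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]  # the `window` readings before i
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history: any change at all is a deviation.
            if readings[i] != mu:
                anomalies.append(i)
            continue
        z = (readings[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Hypothetical vibration amplitudes: a steady signal with one spike.
    signal = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95] * 5
    signal[25] = 4.0
    print(flag_anomalies(signal, window=10))
```

Real predictive-maintenance models learn far richer patterns than a single threshold, but the principle is the same: learn what "normal" looks like and raise an alert on significant departures from it.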

Danieli is rolling this concept out in its steel plants, where it has deployed Amazon Monitron as an end-to-end monitoring system, from the sensors on the machines to the user interface that displays AI-generated predictions.

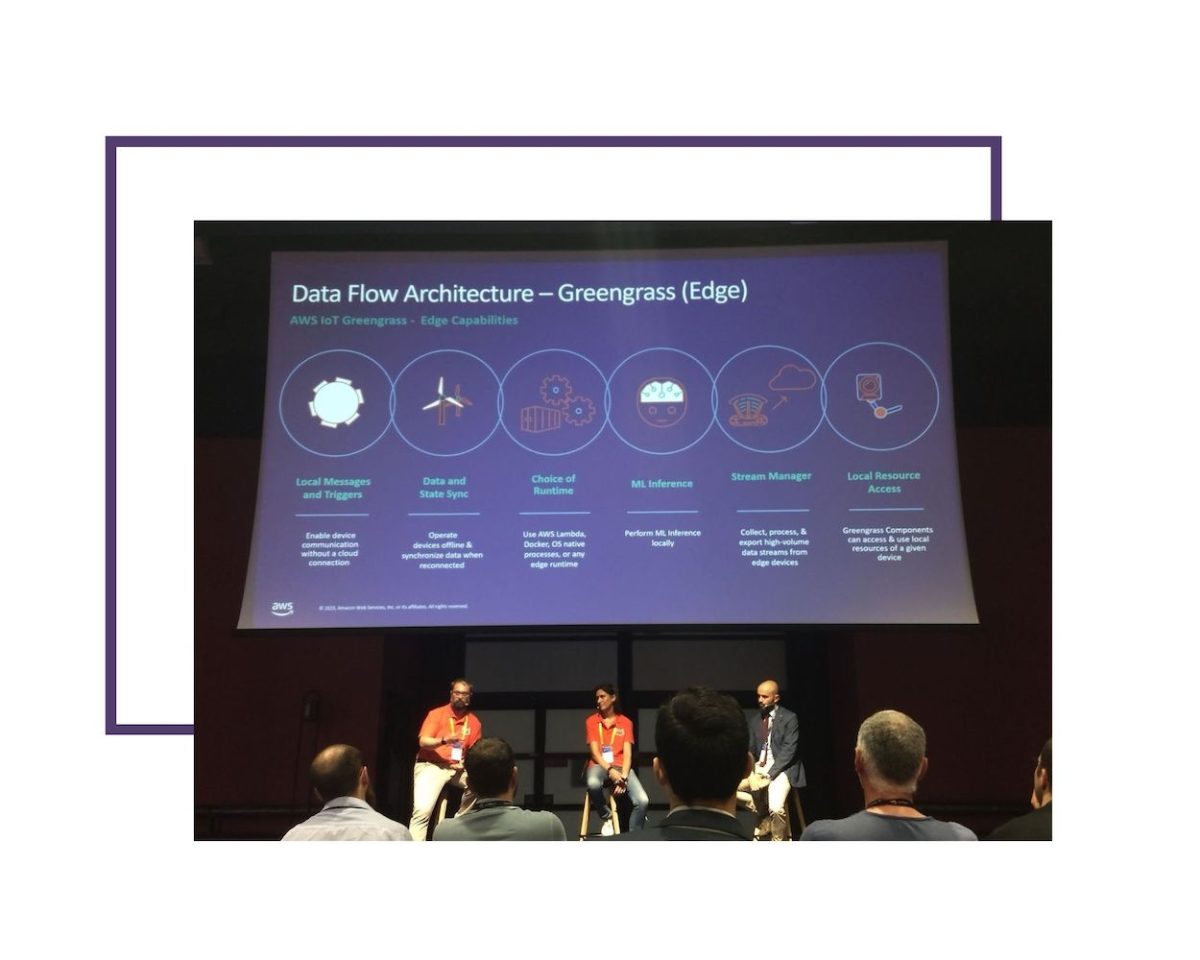

Having AWS IoT Greengrass on both the Edge side (in the plant) and the Cloud side allows data pre-processing (always necessary for the ML training phase) to be distributed seamlessly across the two environments, with AWS IoT SiteWise serving as the interface for remotely collecting and managing all plant-related information.
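To make "pre-processing on the Edge" more concrete, here is a minimal sketch of the kind of reduction that could run in the plant before data leaves it. It assumes raw samples arrive as a list of floats; the function `summarize_windows` and the chosen features (mean, RMS, peak) are hypothetical and are not part of any Greengrass or SiteWise API.

```python
import math


def summarize_windows(samples, window_size):
    """Reduce raw sensor samples to one feature record per full window.

    Each record keeps the mean, the root mean square (RMS) and the peak
    absolute value, so far fewer values need to leave the plant."""
    records = []
    # Only complete windows are summarized; a trailing partial window is
    # left for the next batch.
    for start in range(0, len(samples) - window_size + 1, window_size):
        chunk = samples[start:start + window_size]
        records.append({
            "mean": sum(chunk) / window_size,
            "rms": math.sqrt(sum(x * x for x in chunk) / window_size),
            "peak": max(abs(x) for x in chunk),
        })
    return records


if __name__ == "__main__":
    print(summarize_windows([1, -1, 1, -1, 2, 2, 2, 2, 5], window_size=4))
```

Here nine raw samples become two compact records; at real sampling rates the saving in bandwidth to the Cloud is what makes splitting the work between Edge and Cloud attractive.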

LET’S SEE HOW TO MAKE THE MOST OUT OF THE POWER OF GENERATIVE AI

A highly interesting and timely session, reflecting a moment when generative AIs such as Stable Diffusion, Midjourney, and ChatGPT have gained widespread public awareness, sparking a lively debate about the future of work and of society itself.

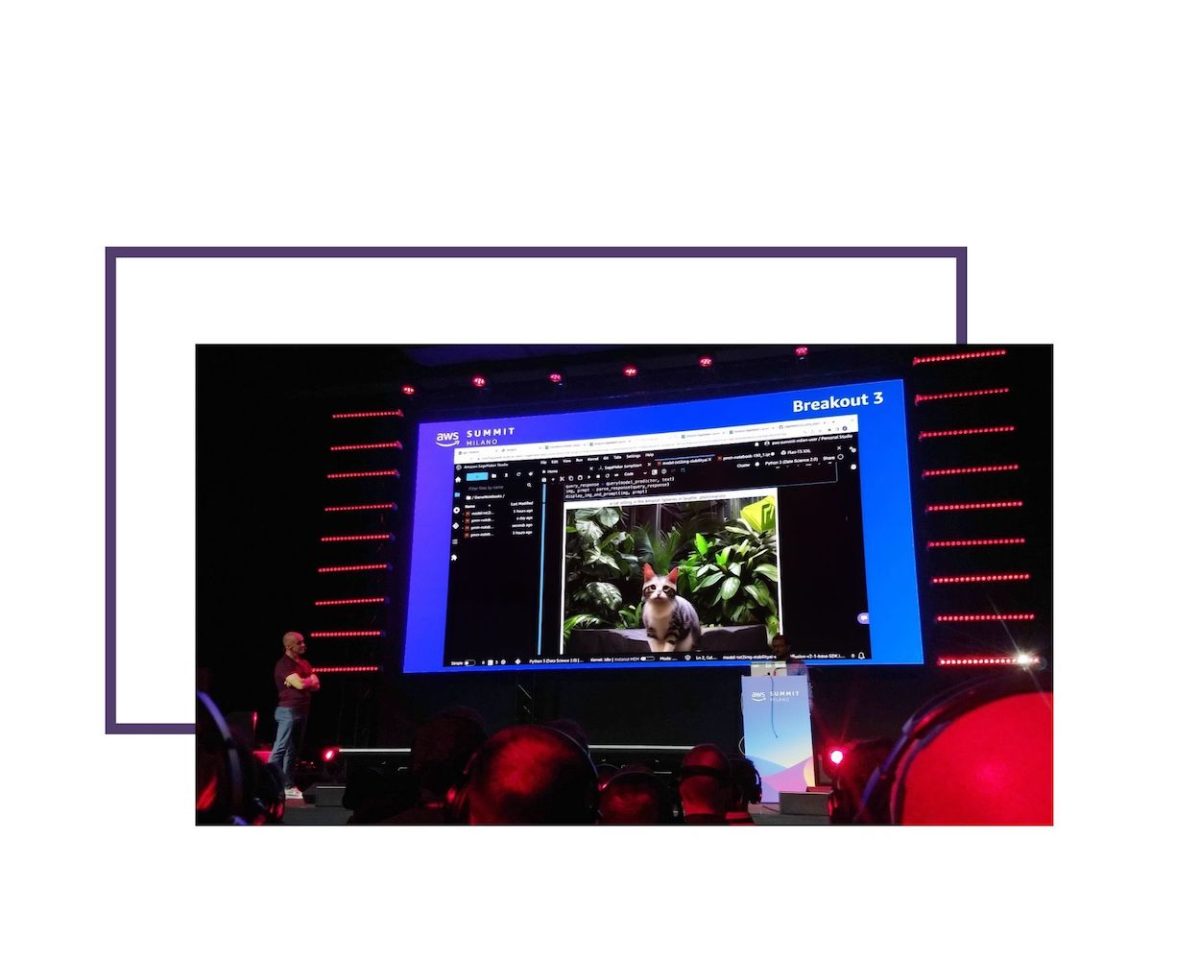

AWS showcased an offering that ranges from dedicated hardware (Trn1n and Inf2 EC2 instances, optimized for training and inference respectively), to pre-trained Foundation Models that can be applied to many different data domains, to the Amazon Bedrock APIs, which place a unified interface in front of different models (foundation or custom, including the famous Stable Diffusion image generator), to the real star of the whole Summit: Amazon SageMaker.

The presenters demonstrated the SageMaker Playground UI, showing how easily it allows low-cost experimentation with different models, all the way to production-ready solutions.

Equally interesting was Amazon CodeWhisperer, a code suggestion assistant for developers that can also detect potential security vulnerabilities in source code in real time.

AVVALE: AUTOMATED DEFECT RECOGNITION FOR THE MANUFACTURING INDUSTRY

A brief but interesting presentation of a solution based on Amazon Rekognition for detecting defects in the production of technical fabrics. Inspection is an extremely slow and costly operation when done manually; it is now automated and significantly accelerated by Computer Vision tools based on Amazon Lookout for Vision.

An ML model is trained to find defects, Rekognition generates the labels that distinguish them, and the resulting model analyzes the fabric as it flows past the cameras at 60 meters per minute, recognizing defects with 85 to 95 percent accuracy.
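To put the 60 meters per minute figure in perspective: that is 1 meter of fabric per second, so the number of frames each camera must analyze depends on how much fabric it sees at once. A minimal sketch of that arithmetic follows; the field-of-view length and the overlap between consecutive frames are hypothetical parameters, since the talk did not state them.

```python
def min_frame_rate(speed_m_per_min, fov_m, overlap=0.0):
    """Minimum frames per second so that consecutive frames cover the whole
    moving fabric without gaps.

    fov_m:   length of fabric (along the direction of travel) in one frame.
    overlap: fraction of each frame that repeats the previous one (0 to <1)."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    speed_m_per_s = speed_m_per_min / 60.0
    advance_per_frame = fov_m * (1.0 - overlap)  # new fabric per frame
    return speed_m_per_s / advance_per_frame


if __name__ == "__main__":
    # Hypothetical camera seeing 25 cm of fabric per frame.
    print(min_frame_rate(60, 0.25))               # no overlap
    print(min_frame_rate(60, 0.25, overlap=0.2))  # 20% overlap
```

Even a modest frame rate is enough at this speed; the real constraint is that every frame must be analyzed by the model at least as fast as frames arrive.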

HIGH PERFORMANCE COMPUTING INNOVATION WITH FERRARI

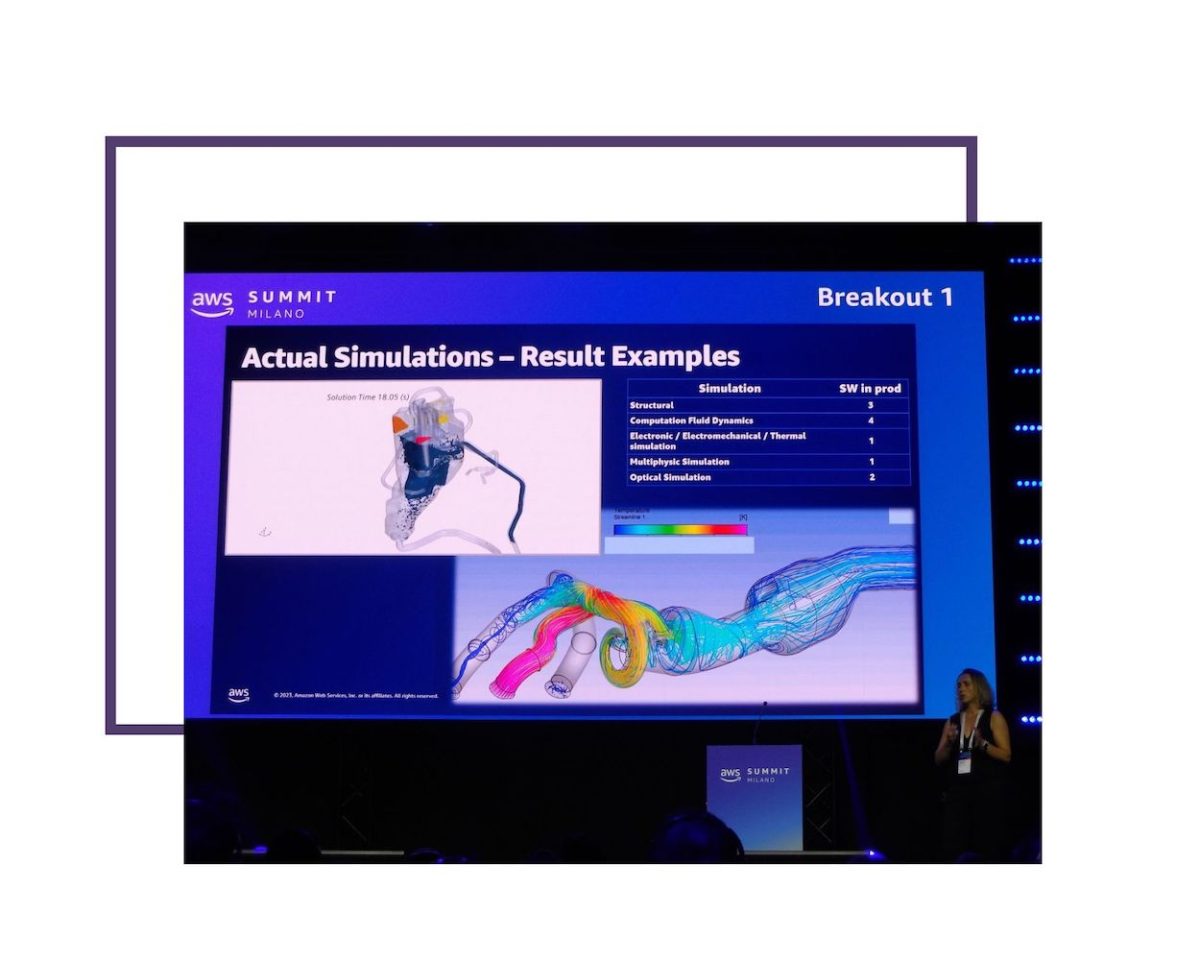

In Maranello, Ferrari has always had a supercomputing center (technically, HPC: High Performance Computing) that it uses to design its cars through finite element structural analysis, fluid dynamics, and physical simulations of various kinds.

In this talk, they explained how they extended their cluster, which was no longer sufficient for all jobs (it had no GPUs, for example) and would have been very costly to replace, by connecting it via AWS Direct Connect to AWS ParallelCluster in a hybrid architecture.

In the Cloud, the cluster can choose among EC2 instance types dedicated to different workloads, such as Hpc6a (AMD CPUs) for fluid dynamics and weather, Hpc6id (Intel CPUs) for finite element analysis or seismic workloads, and Hpc7g (Graviton CPUs, the ARM architecture developed by AWS) for the best price/performance ratio. The Nitro virtualization platform includes dedicated hardware for the network layer and delivers performance nearly identical to that achievable on physical hardware. Elastic Fabric Adapter (EFA) provides an interconnect between nodes that scales almost linearly with the number of cores.

Network connections within the cluster do not use TCP but rather SRD (Scalable Reliable Datagram), a protocol specifically optimized for this purpose.

Likewise, the storage system, which must make large volumes of data accessible very quickly, is optimized specifically for supercomputing: Amazon FSx for Lustre provides a high-performance parallel filesystem as a managed service.

In this hybrid configuration, each simulation job is evaluated based on its computational and data transfer requirements, then sent to, or split among, the most appropriate resources in Maranello and on AWS.
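The talk did not detail Ferrari's actual scheduling rules, so the following is only a minimal sketch of what a placement policy of this kind might look like. The `Job` fields, the `route` function, and the 500 GB transfer threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    cores: int           # cores the simulation needs
    input_gb: float      # data that would have to cross Direct Connect
    needs_gpu: bool = False


def route(job, free_onprem_cores, max_transfer_gb=500.0):
    """Decide where a job runs: 'on-prem', 'cloud', or 'split'.

    Hypothetical policy: GPU jobs go to the Cloud (the on-premises cluster
    has none); jobs that fit in the free on-prem capacity stay local; larger
    jobs go to the Cloud, unless their input data is too big to move
    wholesale, in which case they are split between the two sites."""
    if job.needs_gpu:
        return "cloud"
    if job.cores <= free_onprem_cores:
        return "on-prem"
    if job.input_gb > max_transfer_gb and free_onprem_cores > 0:
        # Use the local cores and burst only the remainder to the Cloud.
        return "split"
    return "cloud"


if __name__ == "__main__":
    jobs = [
        Job("aero-gpu", cores=64, input_gb=10, needs_gpu=True),
        Job("chassis-fem", cores=128, input_gb=10),
        Job("crash-sim", cores=512, input_gb=800),
        Job("param-sweep", cores=512, input_gb=20),
    ]
    for job in jobs:
        print(job.name, "->", route(job, free_onprem_cores=256))
```

A real scheduler would also weigh queue times, licensing, and cost, but the core idea is the same: match each job's needs to the resource that serves it best.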

Execution time savings ranged from 30% to 70%, with execution time decreasing linearly as the number of cores increases. After several benchmarks, the network bandwidth was quadrupled and the number of cores increased, yielding better performance in 100% of the use cases.
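A time saving expressed as a percentage can be easier to read as a speedup factor: a job that took time T and now takes T × (1 − s) runs 1 / (1 − s) times faster. A small sketch of that conversion applied to the 30% and 70% figures quoted in the talk:

```python
def speedup_from_savings(savings):
    """Convert a fractional execution-time saving into a speedup factor.

    If a job that took T now takes T * (1 - savings), it runs
    1 / (1 - savings) times faster."""
    if not 0.0 <= savings < 1.0:
        raise ValueError("savings must be in [0, 1)")
    return 1.0 / (1.0 - savings)


if __name__ == "__main__":
    for s in (0.30, 0.70):
        print(f"{s:.0%} saving -> {speedup_from_savings(s):.2f}x faster")
```

So the reported range corresponds to jobs running roughly 1.4 to 3.3 times faster.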

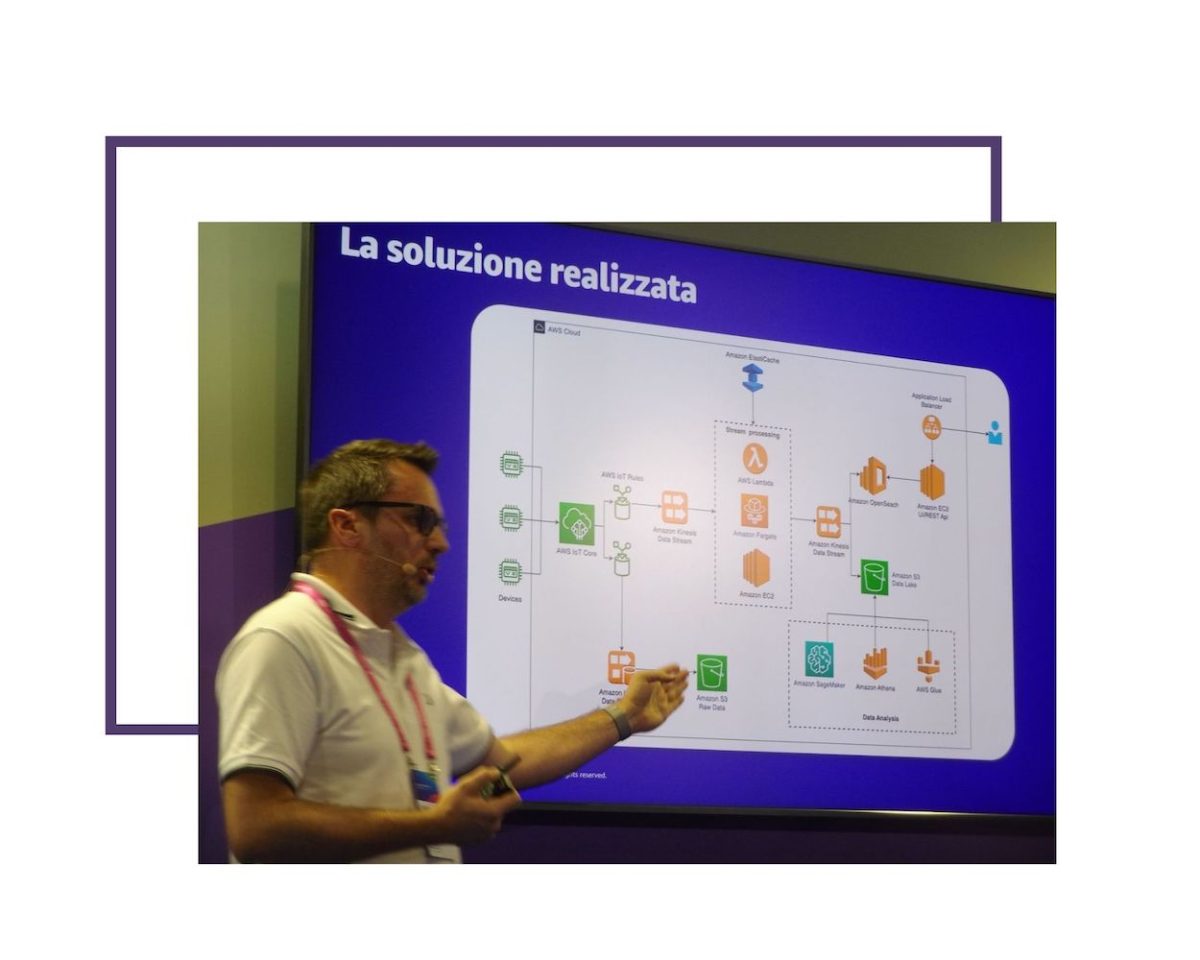

OMNYS, AWS IOT AND SAGEMAKER FOR MANUFACTURING: THE CLIVET EXAMPLE

Circling back to Servitization in the Manufacturing Industry, Omnys gave a concise yet dense presentation of a case study: applying Machine Learning to the Predictive Maintenance, optimization, and monitoring of heat pumps produced by its customer Clivet, one of the industry leaders.

Here again, the ubiquitous SageMaker was embedded in a Kinesis-based IoT pipeline, pulling normalized data from a Data Lake on Amazon S3. Every day, 105 million telemetry messages from systems installed around the world are processed (45 billion have been logged so far), and malfunction prediction has reached 80 percent.
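For a sense of scale, the figures quoted imply a sustained ingestion rate that the Kinesis pipeline must absorb. The sketch below is simple arithmetic on the numbers from the talk; spreading the load evenly over the day and writing each message as its own Kinesis record are simplifying assumptions (real traffic has peaks, and producers often batch messages). A Kinesis Data Streams shard accepts up to 1,000 records per second for writes.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60
SHARD_WRITE_RECORDS_PER_S = 1_000  # Kinesis Data Streams per-shard write limit

daily_messages = 105_000_000       # telemetry messages processed per day
logged_messages = 45_000_000_000   # telemetry messages logged so far

# Average rate, assuming the load is spread evenly over the day.
per_second = daily_messages / SECONDS_PER_DAY
# Shards needed for the average rate if each message is a separate record.
min_shards = math.ceil(per_second / SHARD_WRITE_RECORDS_PER_S)
# How many days of traffic at today's rate the archive corresponds to.
days_equivalent = logged_messages / daily_messages

print(f"{per_second:,.0f} messages/s on average")
print(f"at least {min_shards} shards for the average load")
print(f"{days_equivalent:,.0f} days of traffic at the current rate")
```

Around 1,200 messages per second on average is well within what a managed streaming service handles, which is part of why such a pipeline can be built without dedicated infrastructure.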

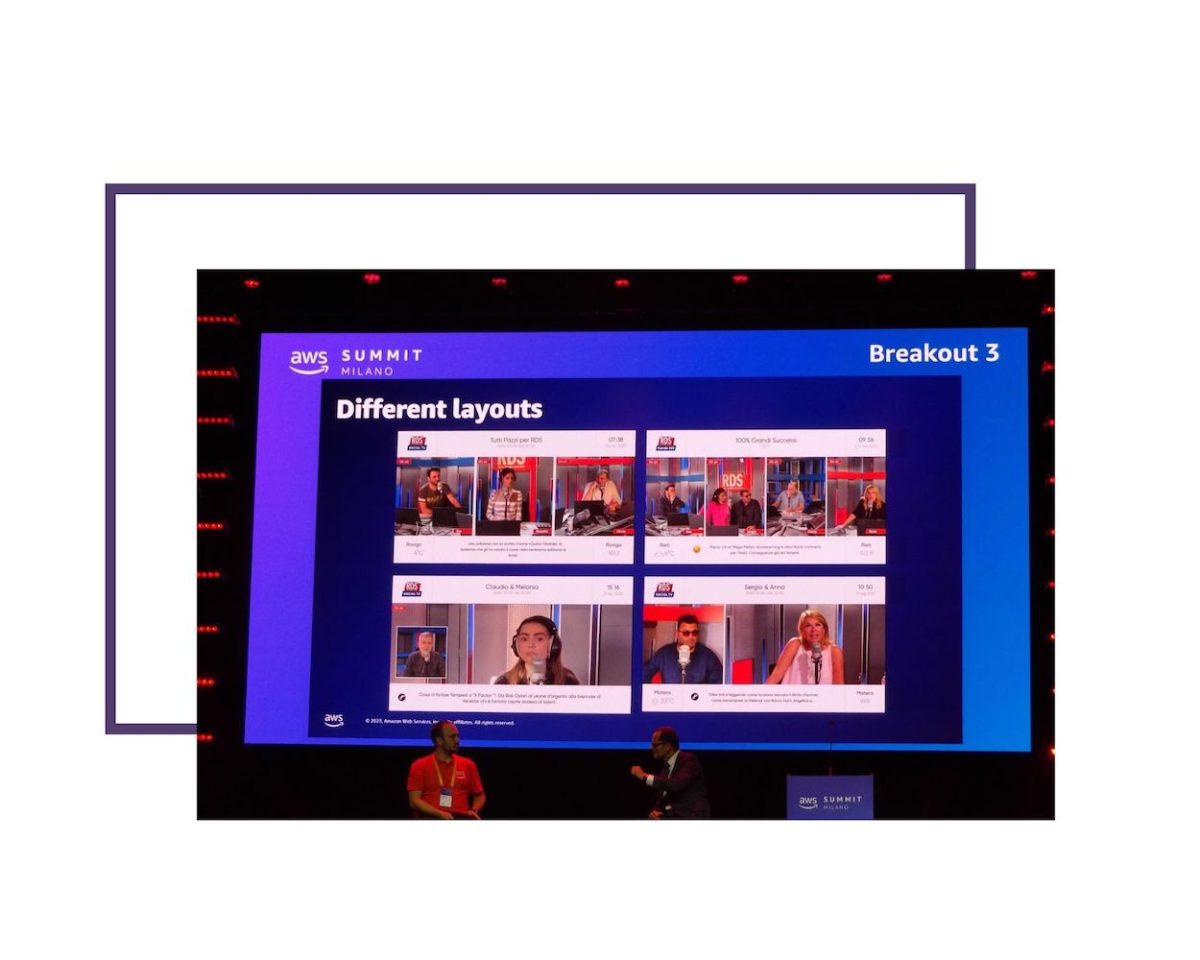

ARTIFICIAL INTELLIGENCE AS FILMMAKER. FIND OUT HOW RADIO DIMENSIONE SUONO STARTED A TV CHANNEL USING AMAZON REKOGNITION

Radio Dimensione Suono was facing major investments in equipment and staff to launch its SOCIAL TV: being a radio station, it had no cameras, lights, video equipment, directors, camera operators, and so on.

As it turns out, they didn’t need them: they adopted Amazon Rekognition as their “director” and just two iPhones as cameras.

A custom-developed iOS app streams the video to the Cloud, where faces are detected and recognized, speech is transcribed, and shots are switched automatically based on what is happening.

On top of the cost savings, the solution enabled close integration with social networks, allowing streams from remote guests to be included in the “director’s” decisions and comments from social media to be moderated automatically.

ON HOW TO OPTIMIZE INDUSTRIAL PROCESSES AND PLANT MAINTENANCE BY GENERATING MACHINE LEARNING MODELS WITH AMAZON SAGEMAKER

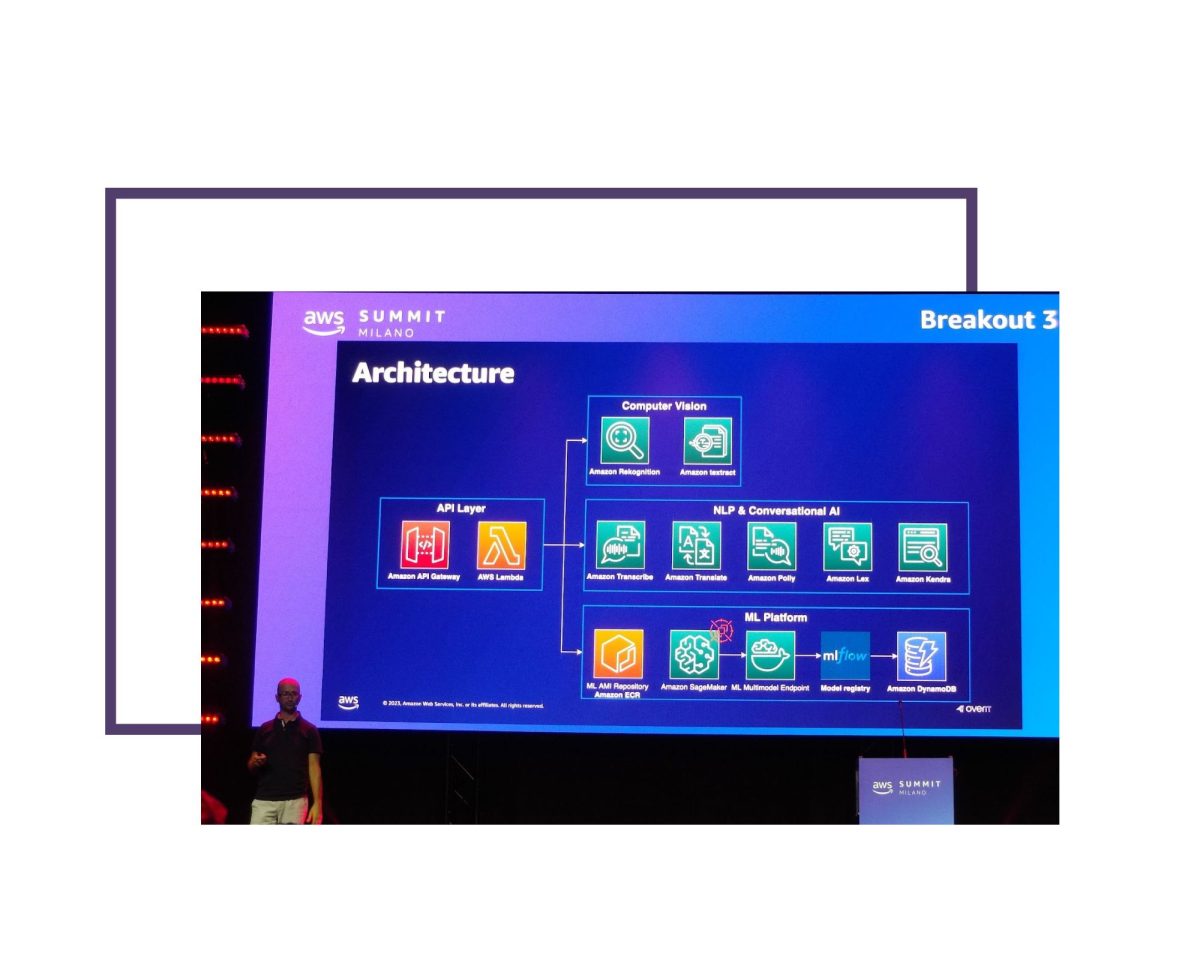

The last session I attended offered two more examples of how Machine Learning is being used for Digital Transformation.

OverIT has begun transferring its extensive know-how in Field Service Management (field support) from traditional Operations Research techniques to ML models based on SageMaker.

The goal, again, is to train AI models that enable Predictive Maintenance services, so that intervention in the field can happen before a problem even occurs.

An interesting use case is automating the debriefing of field operations: using managed services such as Rekognition (capturing paper documents), Transcribe (speech recognition), Translate (translation), and Polly (speech synthesis), the process can be carried out verbally, in any language, leaving the operator’s hands free, with augmented reality glasses if needed.

Prima Industrie likewise used SageMaker to enable Predictive Maintenance of its metal laser-cutting machines. By analyzing video of the equipment in operation, any performance deterioration can be detected.

Faced with a choice, for training the model, between a supervised mode (with a human operator labeling the inputs) and an unsupervised mode (with automatic deviation detection), they tried both approaches and achieved similar results.

They then opted for the unsupervised approach, which allows the model to be trained continuously so that its accuracy grows over time as the machines send new data (half a million telemetry messages per machine per week).
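Why unsupervised training lends itself to continuous learning can be shown with a deliberately simple model: a running baseline that is refined with every new reading and needs no labels. The sketch below uses Welford's online algorithm for mean and variance; it is an illustration under that assumption, not Prima Industrie's actual model, and the class `OnlineBaseline` is hypothetical.

```python
import math


class OnlineBaseline:
    """Running mean/variance of a telemetry feature (Welford's algorithm).

    The baseline is refined with every new reading, so no labelled data is
    needed and the model keeps learning as machines send more telemetry."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def std(self):
        # Sample standard deviation; reported as 0 with fewer than two readings.
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0

    def is_deviation(self, x, k=3.0):
        """True if x lies more than k standard deviations from the mean."""
        if self.n < 2 or self.std == 0.0:
            return False
        return abs(x - self.mean) > k * self.std


if __name__ == "__main__":
    baseline = OnlineBaseline()
    for reading in [10, 12, 11, 13, 9, 11, 10, 12]:
        # Only normal readings update the baseline, so faults are not learned.
        if not baseline.is_deviation(reading):
            baseline.update(reading)
    print(baseline.is_deviation(30), baseline.is_deviation(11))
```

Because each update costs constant time and memory, a model of this shape can keep learning from half a million messages per machine per week without ever reprocessing the full history.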

As for whether to run the model (apply inference) in the Cloud or in the field, weighing the virtually unlimited scalability of the former against the lower bandwidth usage of the latter, adopting AWS IoT Greengrass in both domains meant no choice was required. The machines are thus able to self-diagnose using a model that becomes increasingly accurate.

FINDINGS

Beyond the sessions I focused on, the AWS Summit covered plenty of other interesting topics at both the operational and the business level. Regardless of my choices, however, the overall program made it abundantly clear that Artificial Intelligence is becoming increasingly important in every field, from industry to entertainment and beyond.

And the most life-changing applications are likely still to come.
