After coal and steel, the foundations of the Industrial Revolution, the two most important resources of the 21st century are both intangible: energy and information. Of course, Einstein taught us that matter is nothing more than energy in a condensed state. Still, it is undeniable that, even in economic terms, humanity’s attention is increasingly focused on less tangible resources. As we shall see, energy and information interact with each other at a much deeper level than it might seem.

THE SECOND LAW OF THERMODYNAMICS: THE ARROW OF TIME

Thermodynamics states that in an isolated system energy is neither created nor destroyed; whenever something acts upon the system (namely, whenever the state of its internal elements is modified), energy is redistributed so as to reduce unevenness.

At a microscopic level, the number of possible even arrangements of the particles is much higher than the number of uneven ones.

At a macroscopic level, this means that even states are far more likely to occur than uneven ones, so every system naturally tends toward more even states: an ice cube melts in a glass, mountains wear away, and stars burn out.
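The counting argument can be made concrete with a toy model. The sketch below assumes a hypothetical box split into two halves, with each of `N` particles equally likely to be in either half; the box, the value of `N` and the "8 to 12" window are illustrative choices, not figures from the article.

```python
from math import comb

def microstate_counts(n_particles):
    """For each k, count the ways k of n particles can sit in the left half."""
    return [comb(n_particles, k) for k in range(n_particles + 1)]

N = 20  # hypothetical toy system size
counts = microstate_counts(N)
total = sum(counts)  # every particle has 2 choices, so this equals 2**N

# Perfectly uneven macrostate: every particle in the left half (1 microstate).
p_all_left = counts[N] / total

# Roughly even macrostates: between 8 and 12 particles on the left.
p_near_even = sum(counts[k] for k in range(8, 13)) / total

print(f"all particles on one side:     {p_all_left:.2e}")
print(f"8 to 12 particles on the left: {p_near_even:.2f}")
```

Even with only 20 particles, the near-even arrangements account for most of the possibilities; with the particle counts of a real glass of water the imbalance becomes overwhelming.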

Even living beings, which consume great amounts of energy to grow and to build orderly structures (their bodies), rot away and become amorphous matter once their energy supply fails them.

This unavoidable advance towards energy balance is the only physical phenomenon that distinguishes “past” from “future”: all other phenomena can be “rewound” just like a movie. The chance of recreating an egg from an omelet, however, is extremely slim: it would require enormous amounts of energy or, in order to happen spontaneously, a “thermodynamic miracle”. We could say that the so-called “arrow of time”, namely our perception of time “passing” in just one direction, with information flowing from the past towards the future and never vice versa, derives from this law: the Second Law of Thermodynamics.

WHAT DIFFERENTIATES THE OMELET FROM THE FRESH EGG? THE CONCEPT OF ENTROPY.

So, what differentiates an omelet from a fresh egg? The atoms are the same and the total energy is not that different at all. What’s more, if we cooked the egg inside a totally isolated environment, the total energy would remain exactly the same, according to the First Law of Thermodynamics.

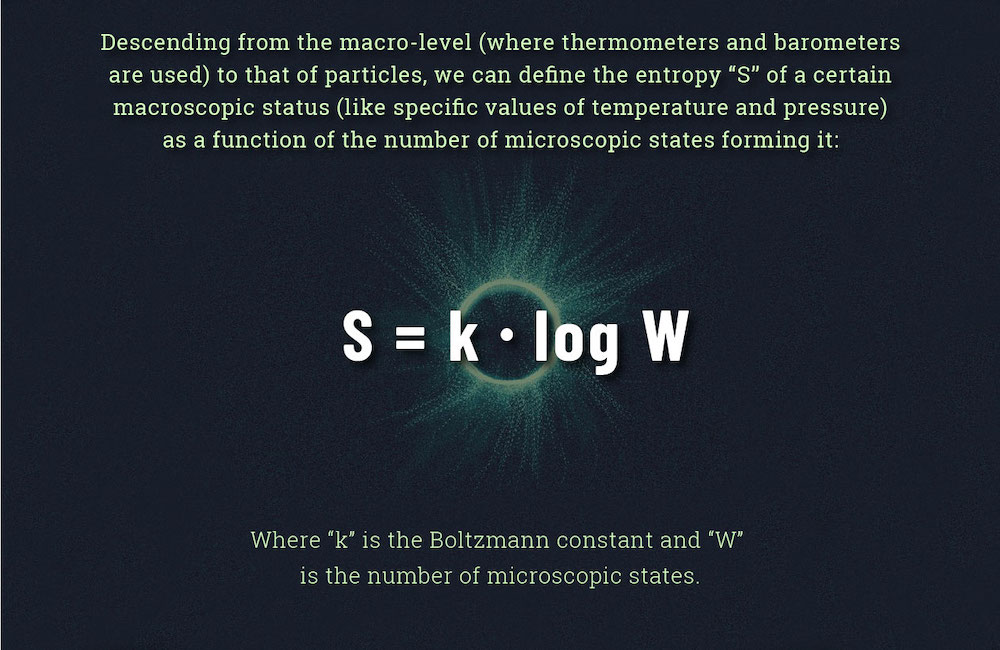

What changes is the property called “entropy”. In 1865, in the midst of the Industrial Revolution, the physicist Rudolf Clausius defined its change as the ratio between the heat exchanged during a change of state and the absolute temperature at which that change takes place.

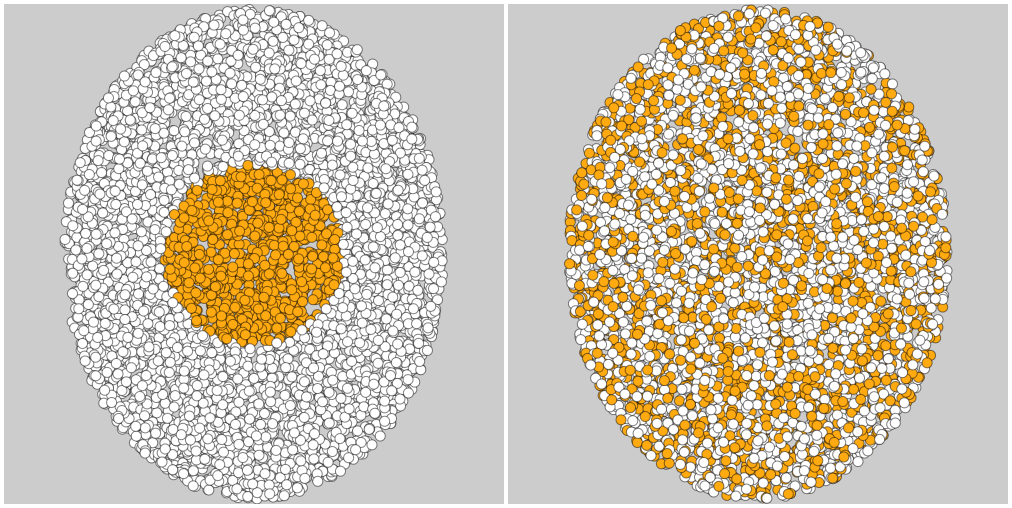
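As a rough worked example of Clausius’s definition, consider melting 1 g of ice at its melting point. The numbers used here (a latent heat of fusion of about 334 J/g and a constant temperature of 273.15 K) are common textbook approximations assumed for illustration, not values taken from this article:

```latex
\mathrm{d}S = \frac{\delta Q}{T}
\qquad\Longrightarrow\qquad
\Delta S_{\text{melt}} = \frac{Q}{T}
\approx \frac{334\ \mathrm{J}}{273.15\ \mathrm{K}}
\approx 1.22\ \mathrm{J/K}
```

The entropy of the water increases because heat flows into it at a fixed temperature, even though no atom has been added or removed.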

In the whole egg: all the yolk molecules lie within a certain radius, while all the egg-white molecules lie outside that radius.

In the beaten egg: there is no longer any distinction between the two regions, so a far higher number of microscopic states can realize it.

The result? After we spent energy beating the egg with a fork, the egg shifted to a higher level of entropy. With all this mixing, we have lost track of the exact position of the individual molecules: we have lost information.

CLAUDE SHANNON AND THE ENTROPY OF INFORMATION

We cannot measure information quantitatively without the help of Claude Shannon, the father of information theory, who formulated his first theorem in 1948.

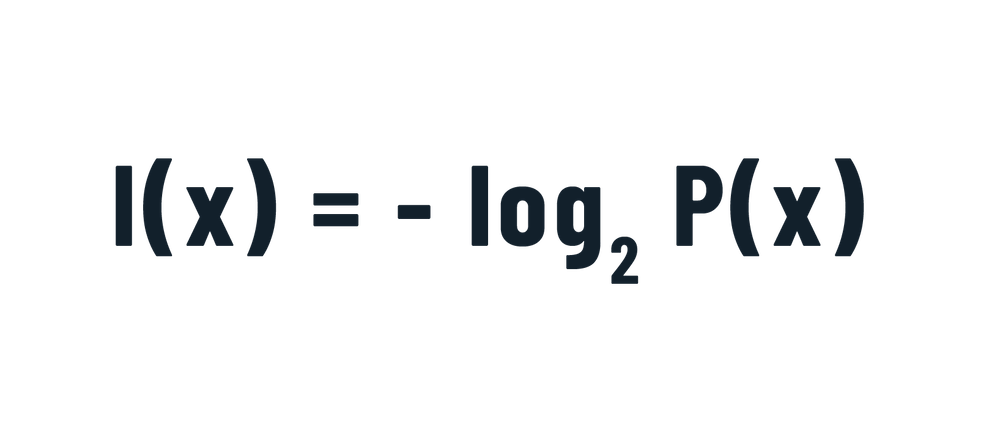

In the context of long-distance communication, the intrinsic information contained in a signal x emitted by a source is:

$$I(x) = -\log_2 P(x)$$

where P(x) is the probability that this particular signal is emitted, among all the possible ones.

To put it simply, if a light bulb turns on and off at random, the probability of seeing it on at a given time is ½ and the information provided by the observation is equal to 1 bit.
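The light-bulb case can be checked numerically. This is a minimal sketch of the self-information of a single observation, measured in bits; the function name `self_information_bits` and the second example probability are illustrative assumptions.

```python
import math

def self_information_bits(p):
    """Self-information, in bits, of observing an event of probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must be in the interval (0, 1]")
    return -math.log2(p)

# The light bulb: on or off with equal probability.
print(self_information_bits(0.5))

# A much rarer signal (hypothetical: 1 chance in 1024) carries more bits.
print(self_information_bits(1 / 1024))
```

The rarer the observation, the more information it carries, which is the property the egg example relies on.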

What if it were an egg? As we have seen, because fewer microscopic states correspond to it, the “whole egg” observation is less probable than the “beaten egg” observation.

The information associated with that macroscopic state is, therefore, greater than that associated with the “beaten” state, which, in thermodynamic terms, sits at a higher level of entropy.
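To see the size of the effect, here is a sketch that assumes a toy model rather than a real egg: `N` particles, each equally likely to be in the left or right half of a container. The "ordered" macrostate plays the role of the whole egg, the "mixed" one the role of the beaten egg. The model and the name `macrostate_information_bits` are assumptions made for illustration.

```python
from math import comb, log2

def macrostate_information_bits(n_particles, k_left):
    """Bits gained on observing exactly k_left of n particles in the left half."""
    # P = comb(n, k) / 2**n, hence -log2(P) = n - log2(comb(n, k)).
    return n_particles - log2(comb(n_particles, k_left))

N = 100  # hypothetical number of particles

ordered = macrostate_information_bits(N, N)     # all particles on one side
mixed = macrostate_information_bits(N, N // 2)  # evenly spread particles

print(f"ordered macrostate: {ordered:.2f} bits")
print(f"mixed macrostate:   {mixed:.2f} bits")
```

In this model the ordered observation carries one bit per particle, while the evenly mixed one carries only a few bits in total, because it is by far the most likely outcome.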

To summarize, every time energy is used to do something, there’s an entropy increment and the information that can be extracted from the system decreases.

And we cannot go back: the “arrow of time” of the Second Law of Thermodynamics dictates that in an isolated system (like the Universe) the overall information can only decrease.

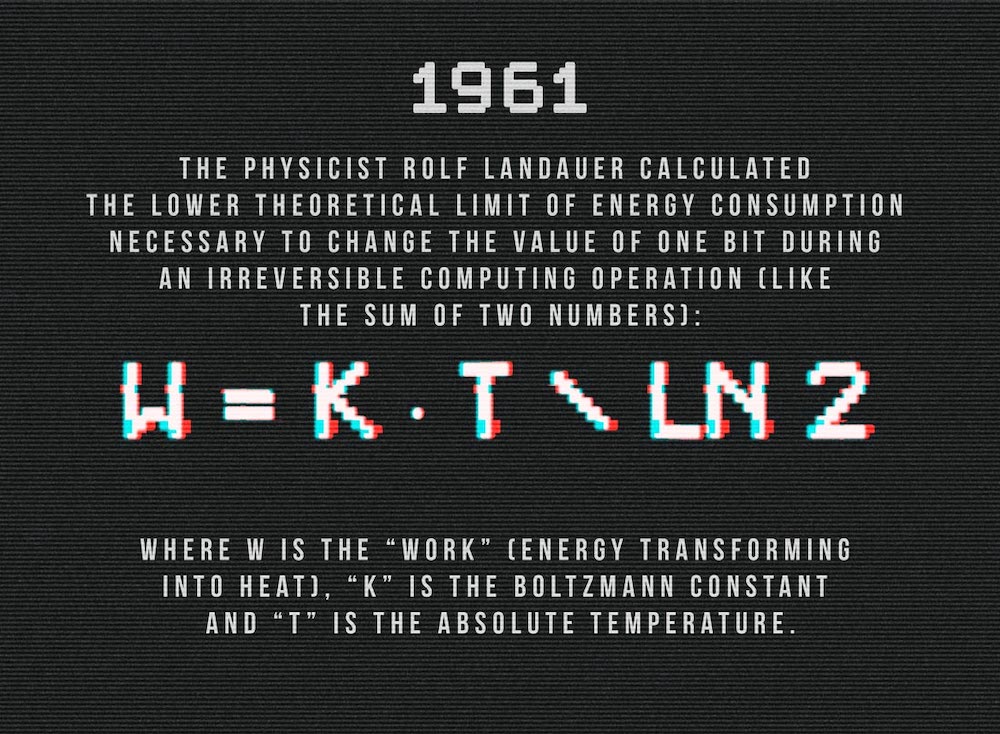

On the other hand, to alter the state of the information held in a system, namely to carry out any kind of information processing, energy must be used, and this causes an increase in entropy.

For instance, at 25°C it is not possible to change (that is, irreversibly overwrite) one bit using less than about 0.0178 electronvolts. It is a very small quantity, and this theoretical limit lies far below the energy that modern computers actually spend per bit today.
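The 0.0178 eV figure is consistent with what is usually called the Landauer limit, E = k·T·ln 2, where k is the Boltzmann constant and T the absolute temperature. The short sketch below reproduces it; the constant is the standard value of k expressed in eV/K, and the function name is an illustrative choice.

```python
import math

# Boltzmann constant in electronvolts per kelvin.
K_BOLTZMANN_EV_PER_K = 8.617333262e-5

def landauer_limit_ev(temp_celsius):
    """Minimum energy, in eV, to erase one bit at the given temperature."""
    temp_kelvin = temp_celsius + 273.15
    return K_BOLTZMANN_EV_PER_K * temp_kelvin * math.log(2)

limit = landauer_limit_ev(25.0)
print(f"Landauer limit at 25°C: {limit:.4f} eV per bit")
```

Because the limit is proportional to the absolute temperature, a colder system could in principle process the same bit with less energy.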

TWO SMALL EXPERIMENTS

There’s a very simple way to obtain a good approximation of the number of bits of information inherent in the description of a state. Modern data compression algorithms are extremely efficient and come close enough to Shannon’s theoretical limits.

Therefore, we can take the description of a scene before and after the variation of entropy and verify, albeit approximately, if the number of bits used to describe it changes.

If we limit ourselves to an analysis of the text, it is obvious that:

“A glass containing 33 ml of water at 10°C”

is much shorter than:

“A glass containing 30 ml of water at 12°C and an ice cube at -4°C”.

Nevertheless, the length of a string isn’t always directly proportional to the amount of information it contains: we can create a file of many megabytes containing nothing but repetitions of the word “hello”. The intrinsic information content of that file, however, won’t be much. A data compression algorithm reveals this by producing a very small file.
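This is easy to try with Python’s standard `zlib` module (the DEFLATE algorithm used by ZIP and GZIP). The sketch compares a repetitive buffer with a random buffer of the same size; the number of repetitions and the use of `os.urandom` as a "maximum information" reference are assumptions added for illustration.

```python
import os
import zlib

repetitive = b"hello " * 500_000            # about 3 MB of the same word
random_bytes = os.urandom(len(repetitive))  # same size, no pattern to exploit

for label, data in (("repetitive", repetitive), ("random", random_bytes)):
    packed = zlib.compress(data, 9)  # 9 = highest compression level
    print(f"{label}: {len(data)} bytes -> {len(packed)} bytes")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random one barely shrinks at all: the compressed size tracks the information content, not the length.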

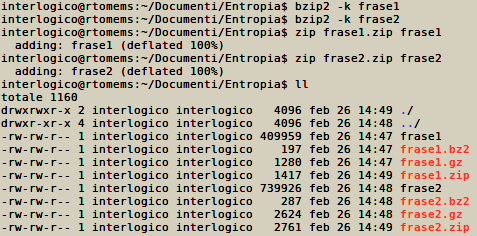

Similarly, we can write each of the two previous phrases 10,000 times into two separate files called “phrase 1” and “phrase 2”, and then compress them with the standard ZIP, GZIP and BZIP2 algorithms. We obtain the following sizes for the compressed files:

Note: the labels “frase 1” and “frase 2” in the picture correspond to the aforementioned “phrase 1” and “phrase 2”.

Allowing for some overhead, it is clear that the longer of the two phrases, which describes the more complex system (like the egg with its yolk still intact), still occupies more space (in bits) after compression than the other one.
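The comparison can be roughly reproduced with Python’s standard library. This is a sketch under stated assumptions: the article does not say how the phrases were separated or encoded, so the newline separator, the UTF-8 encoding and the compression level 9 are choices made here, and the byte counts will not match the article’s figures exactly.

```python
import bz2
import gzip
import zlib

PHRASE_1 = "A glass containing 33 ml of water at 10°C"
PHRASE_2 = "A glass containing 30 ml of water at 12°C and an ice cube at -4°C"
REPEATS = 10_000

# Each entry maps a label to a function returning the compressed bytes.
COMPRESSORS = {
    "zlib (DEFLATE, as in ZIP)": lambda data: zlib.compress(data, 9),
    "gzip": lambda data: gzip.compress(data, compresslevel=9),
    "bzip2": lambda data: bz2.compress(data, 9),
}

def build_payload(phrase, repeats=REPEATS):
    """Repeat the phrase, one copy per line, and encode it as UTF-8 bytes."""
    return (phrase + "\n").encode("utf-8") * repeats

def compressed_sizes(phrase, repeats=REPEATS):
    """Return the compressed size in bytes for each compressor."""
    data = build_payload(phrase, repeats)
    return {name: len(fn(data)) for name, fn in COMPRESSORS.items()}

for label, phrase in (("phrase 1", PHRASE_1), ("phrase 2", PHRASE_2)):
    raw_size = len(build_payload(phrase))
    print(label, "raw:", raw_size, "compressed:", compressed_sizes(phrase))
```

Since both files are pure repetition, the compressed sizes are tiny and partly dominated by format overhead, which is why the result should be read as indicative only.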

By radically changing the method of description, and in a far from scientific fashion, we can run the same “experiment” with images. The picture showing the low-entropy system (the one with the ice cubes), taken in almost exactly the same conditions and compressed in JPEG format using the same parameters, occupies more bits than the picture of the high-entropy system (after the ice cubes have melted).

CONCLUSIONS

Information does not merely allow us to manage growing volumes of energy production, distribution and consumption efficiently. It is also a feature of reality that is closely connected to the nature of energy itself.
