Keeping in mind that “you are not the user”, the best way to create user-centered products is to involve users in the main steps of the project:

- during analysis and design, to build empathy through surveys and questionnaires;

- during testing, to ensure that the choices made follow usability principles and match users’ expectations.

Usability testing is the most powerful tool we have to examine usability and user-centeredness together and, last but not least, to convey their importance.

Usability testing should be conducted in every phase of a project, and its nature and purpose change accordingly:

- Comparative Usability Testing: it evaluates a design against competitors or against a previous version of itself;

- Exploratory Usability Testing: it establishes what content and functionality a new product should include to meet the needs of its users;

- Usability Evaluation: it checks that a product provides a positive user experience, either pre- or post-launch.

These tests can be carried out on different artifacts, depending on the results we aim to obtain and the aspects to be evaluated:

- sketches

- flows

- sitemaps

- wireframes

- mockups

- paper models

- interactive prototypes (lo-fi, hi-fi)

- final product

- etc.

In some cases, the sessions need to be recorded to allow in-depth analysis and reporting, which in turn support the documentation, the strategies, and the decisions made after the test.

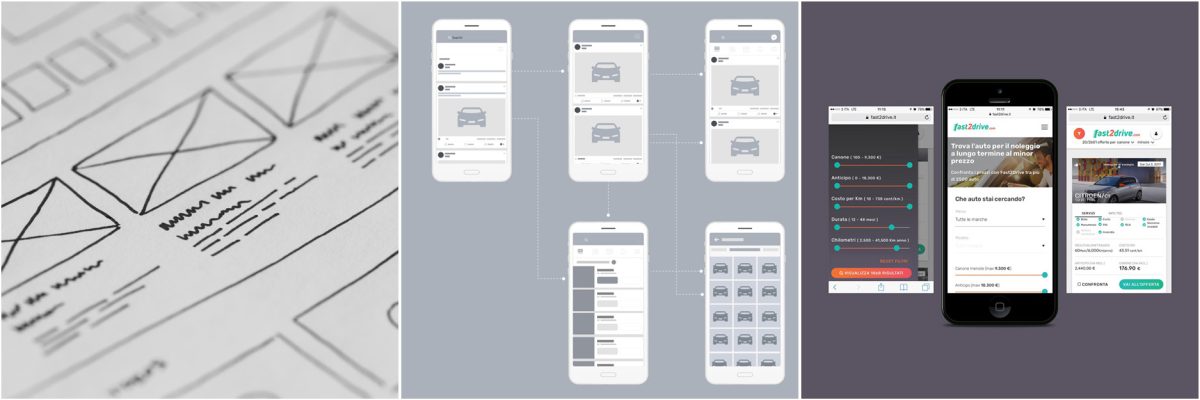

CASE: Fast2drive.com

We recently conducted post-launch usability testing on Fast2drive.com, our car comparison tool for long-term rentals.

The purpose was to verify the behavior of the app on mobile devices, in order to guarantee the best possible experience to most of our users (according to our analytics data, over 60% of users are on mobile devices).

TEST SETUP

1) Task

A careful analysis of the system revealed a series of potential issues that we wanted to investigate, so we defined a plausible user scenario featuring these issues.

2) Recruitment

We then selected the users who would take part in the test.

Since Fast2drive.com is a public app that doesn’t require a deep understanding of the field, we decided to recruit the participants from within Interlogica, taking care to keep a fairly heterogeneous mix of devices, knowledge of the context, and familiarity with technology.

The request for participation was sent through Workplace, a collaboration platform run by Facebook that Interlogica uses daily with remarkable results.

3) Staging

Since it was our first mobile usability test, the hardest part was probably configuring the set for audio/video recording.

After several attempts, we settled on the following setup:

- a tripod;

- a smartphone equipped with software that streams a recording of the tester’s device;

- a PC with Camtasia recording the video from the smartphone, the audio, and the tester.

Recording participants’ reactions throughout the test was crucial.

4) Development

The test ran over two working days and involved 4 users and 2 designers. Each session lasted about 1 hour and was organized as follows:

- introduction: a brief but necessary talk to clarify the aim of the test, put the user at ease, and obtain consent for the recording;

- task execution: the user takes on the assigned activities and carries them out while thinking out loud;

- final observations: time for comments on the test, clarifications, and questions.

The moderator was critical to guaranteeing the quality and clarity of the test. He made sure to moderate the session without interfering with the tester’s actions, so as to keep the results as authentic as possible.

CONCLUSIONS

A number of usability issues emerged from this test, mainly concerning low-end devices. Among them:

- the comparison interface turned out to be too large and did not allow new vehicles to be added;

- the disabled buttons confused users, who were unable to tell what state the buttons were in;

- performance suffered: running simultaneous tasks occasionally slowed down the app.

The test helped us start developing the core business of the app and highlighted possible opportunities for the future.

Everything was documented in a report that described the sessions, categorized and prioritized the critical issues to be solved, recorded the possible improvements, and defined the strategies for continuing the work.
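As an illustration of how issues like these might be categorized and prioritized, here is a minimal Python sketch. It is not the method used in our report: the category labels, the 1 to 4 severity scale, the number of affected participants, and the scoring formula are all hypothetical values invented for the example. Only the three issue descriptions come from the test.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    description: str
    category: str        # hypothetical label, not taken from the report
    severity: int        # hypothetical scale: 1 (cosmetic) to 4 (blocking)
    affected_users: int  # hypothetical count of participants who hit the issue


def priority_score(issue: Issue, participants: int) -> float:
    """Assumed scoring rule: severity weighted by the share of affected participants."""
    return issue.severity * issue.affected_users / participants


def prioritize(issues: list[Issue], participants: int = 4) -> list[Issue]:
    """Return the issues sorted from highest to lowest priority score."""
    return sorted(
        issues,
        key=lambda issue: priority_score(issue, participants),
        reverse=True,
    )


# Issue descriptions come from the test; every number below is made up.
issues = [
    Issue("Simultaneous tasks occasionally slow down the app", "performance", 2, 2),
    Issue("Disabled buttons do not communicate their state", "feedback", 3, 2),
    Issue("Comparison interface too large, new vehicles cannot be added", "layout", 4, 3),
]

ranked = prioritize(issues)
for rank, issue in enumerate(ranked, start=1):
    score = priority_score(issue, participants=4)
    print(f"{rank}. [{issue.category}] {issue.description} (score {score:.2f})")
```

Weighting severity by the share of affected participants is only one possible rule; with just 4 users, a team may prefer to rank by severity alone and use frequency as a tie-breaker.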

This allowed us to make targeted changes to improve the app and eliminate the irritating friction that affected the user experience.